SYNETIC.ai

Research Methodology

How the study was conducted to ensure scientific rigor and eliminate bias.

Independent Validation

The University of South Carolina conducted this research independently. Synetic provided synthetic training data, USC provided real-world validation data, and all testing was performed by university researchers with no financial stake in the outcome.

Test Conditions

Rigorous Testing Protocol

Each model was trained with identical hyperparameters, training duration, and hardware. The only variable was the training data source (synthetic vs. real), which isolated data quality as the performance differentiator.
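The single-variable design above can be expressed as a minimal sketch, assuming a hypothetical `TrainConfig` dataclass. The field names and values are illustrative placeholders, not the study's actual settings. Both runs are derived from one frozen base configuration, so the only field that can differ is the data source.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class TrainConfig:
    # Hypothetical fields; the values used below are placeholders,
    # not the hyperparameters used in the USC study.
    model: str
    epochs: int
    batch_size: int
    learning_rate: float
    hardware: str
    data_source: str


def differing_fields(a: TrainConfig, b: TrainConfig) -> list:
    """Return the names of fields whose values differ between two configs."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


# One shared base: hyperparameters, duration, and hardware are fixed here.
base = TrainConfig(
    model="detector",
    epochs=100,
    batch_size=16,
    learning_rate=1e-3,
    hardware="gpu-0",
    data_source="synthetic",
)

# The real-data run is copied from the same base; only data_source changes.
synthetic_run = base
real_run = replace(base, data_source="real")

print(differing_fields(synthetic_run, real_run))  # ['data_source']
```

Deriving every run from one frozen object, rather than writing each config by hand, makes an accidental second variable (a different learning rate, say) show up immediately in `differing_fields`.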

Real-World Validation

The critical test: validation was performed exclusively on real-world images, captured in actual orchards, that the models had never seen during training. This proves real-world transferability, not just synthetic-to-synthetic performance.

Why This Methodology Matters

Many synthetic data companies only test on synthetic validation data, which proves nothing about real-world performance.

We tested exclusively on real-world images our models had never encountered, proving the domain gap has been eliminated.

The independent validation by a respected university research institution eliminates any possibility of bias or cherry-picked results.

Why Synthetic Data Outperforms Real-World Data

The performance advantage isn’t magic—it’s systematic superiority across multiple dimensions.

Perfect Label Accuracy

Human labels: ~90% accurate

Synetic labels: 100% accurate

Human labelers make mistakes due to fatigue, oversight, and judgment calls on edge cases. Our procedural rendering generates mathematically perfect labels—every pixel, every bounding box, every segmentation mask is precisely accurate.

Result: Models learn from ground truth that’s actually true, not approximations with a ~10% error rate.
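To illustrate why rendered labels can be exact, here is a minimal sketch assuming the renderer outputs a per-pixel instance-ID mask (0 for background, one integer per object). The function name `boxes_from_instance_mask` is hypothetical. Because the scene defines which object every pixel belongs to, each bounding box follows deterministically from the mask instead of being drawn by hand.

```python
def boxes_from_instance_mask(mask):
    """Derive (x_min, y_min, x_max, y_max) boxes from an instance-ID mask.

    Assumes mask is a list of rows, 0 is background, and every other
    integer is one object instance. Coordinates are inclusive pixel indices.
    """
    boxes = {}
    for y, row in enumerate(mask):
        for x, instance_id in enumerate(row):
            if instance_id == 0:
                continue  # background pixel, no label
            if instance_id not in boxes:
                boxes[instance_id] = [x, y, x, y]
            else:
                box = boxes[instance_id]
                box[0] = min(box[0], x)
                box[1] = min(box[1], y)
                box[2] = max(box[2], x)
                box[3] = max(box[3], y)
    return {k: tuple(v) for k, v in boxes.items()}


# Toy 5x6 mask with two objects, as a renderer might emit it.
mask = [
    [0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 1, 1, 1, 0, 0],
    [0, 0, 0, 0, 2, 2],
    [0, 0, 0, 0, 2, 2],
]

print(boxes_from_instance_mask(mask))
# {1: (1, 1, 3, 2), 2: (4, 3, 5, 4)}
```

The same mask also serves directly as the segmentation label, so boxes and masks cannot disagree with each other.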

Superior Data Diversity

Real-world datasets have inherent biases based on when and where data was collected. Synthetic data provides:

Balanced representation across all conditions

Controlled parameter variations

Unlimited variations without collection constraints

No geographic or temporal bias

Result: Training signal is more diverse and representative of deployment conditions.
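Balanced representation and controlled variation can be sketched as follows, assuming a hypothetical scene-parameter space (the condition names and ranges are illustrative, not Synetic's actual generator settings). Categorical conditions are assigned round-robin so each appears equally often by construction, and continuous parameters are drawn uniformly from fixed ranges with a seeded generator so the dataset can be regenerated exactly.

```python
import random
from collections import Counter

# Hypothetical parameter space; names and ranges are illustrative assumptions.
LIGHTING = ["dawn", "noon", "dusk", "overcast"]
CAMERA_DISTANCE_M = (0.5, 5.0)   # uniform range, metres
CAMERA_PITCH_DEG = (-30.0, 30.0)  # uniform range, degrees


def sample_scenes(n, seed=0):
    """Generate n scene specs with equal counts per lighting condition.

    Lighting is assigned round-robin (balanced by construction); camera
    distance and pitch are drawn uniformly within fixed ranges.
    """
    rng = random.Random(seed)  # seeded, so the same dataset can be rebuilt
    scenes = []
    for i in range(n):
        scenes.append({
            "lighting": LIGHTING[i % len(LIGHTING)],
            "camera_distance_m": rng.uniform(*CAMERA_DISTANCE_M),
            "camera_pitch_deg": rng.uniform(*CAMERA_PITCH_DEG),
        })
    return scenes


scenes = sample_scenes(1000, seed=42)
# Each of the four lighting conditions appears exactly 250 times.
print(Counter(s["lighting"] for s in scenes))
```

A real-world collection campaign cannot enforce this kind of balance; here it is a property of the sampling code rather than of when and where photos happened to be taken.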

Systematic Edge Case Coverage

Real-world data is limited by what you can photograph and what naturally occurs during collection. Synthetic data systematically covers the entire distribution:

All lighting conditions (dawn, noon, dusk, night, overcast, direct sun)

All weather variations (clear, rain, fog, snow, varying intensities)

All occlusion scenarios (partial, full, overlapping objects)

All camera angles and distances

Rare events that occur infrequently in real data

Result: Models see comprehensive training examples, not just common scenarios.
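Systematic coverage can be pictured as a full factorial grid over the condition axes listed above. This is a minimal sketch: the axis levels are taken from the list, with "none" added as an assumed occlusion baseline and weather intensities left out for brevity. Every combination, including rare ones such as full occlusion at night in fog, is enumerated exactly once.

```python
from itertools import product

# Levels taken from the list above; "none" for occlusion is an assumption,
# and weather intensity is omitted to keep the grid small.
LIGHTING = ["dawn", "noon", "dusk", "night", "overcast", "direct sun"]
WEATHER = ["clear", "rain", "fog", "snow"]
OCCLUSION = ["none", "partial", "full", "overlapping"]


def condition_grid():
    """Yield every combination of lighting, weather, and occlusion once."""
    for lighting, weather, occlusion in product(LIGHTING, WEATHER, OCCLUSION):
        yield {"lighting": lighting, "weather": weather, "occlusion": occlusion}


grid = list(condition_grid())
print(len(grid))  # 6 * 4 * 4 = 96 combinations

# A combination that may never occur during a real collection campaign.
rare = [c for c in grid
        if c["lighting"] == "night" and c["weather"] == "fog"
        and c["occlusion"] == "full"]
print(rare)
```

In practice each grid cell would then be populated with many randomized scenes; the grid only guarantees that no cell is left empty.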

Physics-Based Accuracy

Unlike generative AI (which can hallucinate or create artifacts), our procedural rendering uses physics simulation:

Ray-traced lighting (physically accurate)

Real material properties (accurate reflectance, transparency)

Genuine camera optics simulation

No neural network artifacts or hallucinations

Result: Synthetic images are statistically indistinguishable from real photographs in the feature space.

What You Get

| | Real-World Approach | Synetic Approach |
|---|---|---|
| Time to deployment | 6-18 months | 2-4 weeks |
| Model accuracy | 70-85% | 90-99% (+34%) |
| Label quality | ~90% accurate | 100% perfect |
| Edge case coverage | Limited by collection | Unlimited & systematic |
| Edge case coverage | Collection-limited | Unlimited generation |
| Iteration speed | Months per change | Days per change |

The Evidence: Synthetic Data Outperforms Real by 34%

University-validated. Peer-reviewed. Independently verified.

Not marketing claims—published science.

Authors: Synetic AI with Dr. Ramtin Zand and James Blake Seekings (University of South Carolina)

Published on: ResearchGate, November 2025

Addressing Common Concerns

We’ve heard every objection to synthetic data. Here’s how the evidence answers each one.

“Domain gap will hurt real-world performance”

Domain gap has been eliminated. This was the central question of the USC study, and it was definitively answered: models trained on 100% synthetic data achieved 34% better performance than models trained on real-world data, measured on real-world validation images neither had seen.

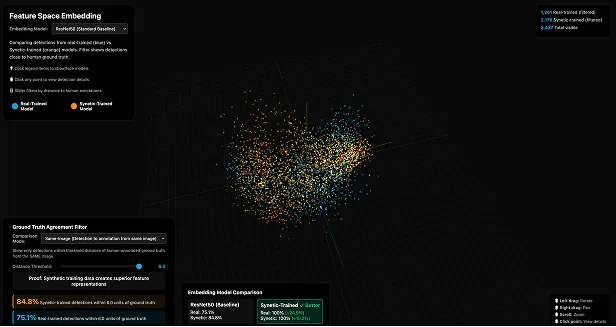

The proof: PCA, t-SNE, and UMAP projections of the model embeddings show synthetic and real data occupying the same feature space. If a domain gap existed, performance would drop on real data. Instead, it increased by 34%.
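PCA, t-SNE, and UMAP are visual checks. A simple numeric companion, assuming you already have embedding vectors for both image sets from the same model, is to compare the distance between the synthetic and real centroids with the average spread within each set. This centroid-gap ratio is a heuristic swapped in for illustration, not the analysis used in the study, and the toy vectors below are random stand-ins rather than study data.

```python
import math
import random


def centroid(vectors):
    """Mean vector of a list of equal-length vectors."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def mean_spread(vectors, center):
    """Average Euclidean distance from each vector to the given center."""
    return sum(math.dist(v, center) for v in vectors) / len(vectors)


def centroid_gap_ratio(synthetic, real):
    """Centroid distance between the two sets, relative to their average spread.

    Values near 0 mean both sets are centred in the same region; values well
    above 1 mean they form separate clusters (a visible domain gap).
    """
    c_syn, c_real = centroid(synthetic), centroid(real)
    spread = (mean_spread(synthetic, c_syn) + mean_spread(real, c_real)) / 2
    return math.dist(c_syn, c_real) / spread


def fake_embeddings(rng, n, offset, dim=8):
    """Toy Gaussian vectors standing in for model features (not real data)."""
    return [[rng.gauss(offset, 1.0) for _ in range(dim)] for _ in range(n)]


rng = random.Random(0)
same_domain = centroid_gap_ratio(fake_embeddings(rng, 500, 0.0),
                                 fake_embeddings(rng, 500, 0.0))
shifted = centroid_gap_ratio(fake_embeddings(rng, 500, 0.0),
                             fake_embeddings(rng, 500, 3.0))
# Overlapping sets give a small ratio; a shifted cluster gives a large one.
print(f"same domain: {same_domain:.2f}, shifted: {shifted:.2f}")
```

A low ratio is necessary but not sufficient evidence of overlap, since two sets can share a centroid while differing in shape; that is why projections such as PCA or UMAP are usually inspected alongside a number like this.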

“Edge cases won’t be adequately covered”

Synthetic data excels at edge cases. Real-world data is limited by what you happen to photograph. Rare events are underrepresented. Synthetic data systematically generates edge cases:

Extreme lighting (very dark, very bright, backlighting)

Heavy occlusion scenarios

Unusual angles and perspectives

Rare weather conditions

Objects at detection boundaries

The proof: Our models detected apples that human labelers missed—edge cases where objects were heavily occluded or at challenging angles.

“This only works for simple tasks like apple detection”

Apple detection was chosen as the first peer-reviewed proof point specifically because it’s well-understood and could be rigorously validated by university researchers. The principles apply universally to computer vision tasks.

We’ve successfully deployed synthetic data training across:

Defense: Threat detection, surveillance, perimeter security

Manufacturing: Defect detection, assembly verification, QC

Security: Anomaly detection, intrusion detection

Robotics: Navigation, manipulation, object recognition

Logistics: Package tracking, safety monitoring

Expanding the evidence: We’re actively seeking 10 companies across different industries for Validation Challenge case studies. Join the program to help expand the evidence base.

“What about generative AI synthetic data like Stable Diffusion?”

Generative AI and procedural rendering are fundamentally different approaches:

| Aspect | Generative AI (Stable Diffusion, Midjourney) | Synetic Procedural Rendering |
|---|---|---|
| Image generation | Neural network prediction | Physics simulation |
| Accuracy | Can hallucinate details | Mathematically perfect |
| Labels | Must be generated separately | Perfect labels, generated automatically |
| Artifacts | AI artifacts common | No artifacts |
| Control | Prompt-based (imprecise) | Parameter-based (exact) |
| Validation | Limited peer review | USC peer-reviewed (+34%) |

Bottom line: Generative AI creates plausible images. We create physically accurate simulations with perfect ground truth.

“How do I know this will work for my specific use case?”

Test it risk-free. We’re so confident in our approach that we offer a 100% money-back performance guarantee. If our synthetic-trained model doesn’t meet or exceed your expectations (or doesn’t outperform your existing real-world trained models), we refund 100%.

Additionally, join our Validation Challenge program at 50% off. We’ll work with you to prove it works for your specific application, and you’ll contribute to expanding the evidence base.